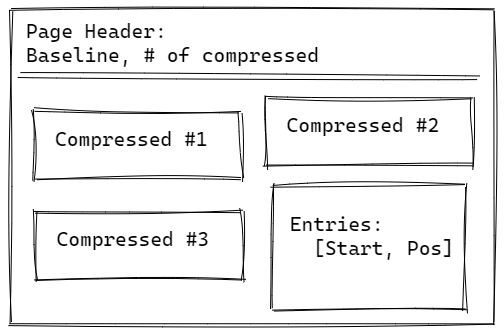

We looked into the internals of Corax’s posting list, and in the last post I mentioned that we have a problem with the Baseline of the page.

We are by no means the first people to work with posting lists, and there is a wide body of knowledge on the topic. When it came time to figure out the compression format for our posting list, we used the PFOR format (Patched Frame of Reference). It, like pretty much all other integer compression methods, uses 32-bit integers. Corax uses 64-bit integers as the document ids, so that was a problem. We solved that problem by using a Baseline for each page. In other words, each page would be able to contain values within a range of 2.1 billion of one another. That is a very reasonable range, given that a page is 8KB in size.
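To make the Baseline idea concrete, here is a minimal sketch of storing 64-bit ids as 32-bit offsets from a per-page baseline. The names (`PAGE_RANGE`, `to_page_offsets`, `from_page_offsets`) are made up for illustration, and the exact range is an assumption based on the 2.1 billion figure above; this is not the actual Corax code.

```python
# Assumption: offsets must fit in a signed 32-bit integer (about 2.1 billion).
PAGE_RANGE = 2**31


def to_page_offsets(baseline, ids):
    """Encode 64-bit ids as small offsets relative to the page baseline."""
    offsets = []
    for doc_id in ids:
        offset = doc_id - baseline
        if not 0 <= offset < PAGE_RANGE:
            # The id is too far from the baseline, so it belongs on another page.
            raise ValueError(f"{doc_id} does not fit in this page")
        offsets.append(offset)
    return offsets


def from_page_offsets(baseline, offsets):
    """Rebuild the original 64-bit ids from the baseline and the offsets."""
    return [baseline + offset for offset in offsets]


ids = [5_000_000_000_000, 5_000_000_000_512, 5_000_001_000_000]
baseline = ids[0]
offsets = to_page_offsets(baseline, ids)
print(offsets)
```

The offsets are small enough to hand to a 32-bit compression routine, while the baseline carries the 64-bit part once per page.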

A problem showed up as we built more features into Corax. The posting list needed to store not just the document id, but also the frequency of the term in the source document. It turns out that we need 8 bits to do so, and we already have a 64-bit range so… Instead of creating another location to store the frequencies, we put them directly inside the posting list. But that meant that we reserved a range of bits. We have 64 bits overall, so not a big problem, right? Except that on a per-page basis, we have a lot less. Before, a page could contain a range of 2.1 billion, but we reserved 10 bits (frequency and tag, the details of which are not important to our story) and we ended up with a range of 4 million per page. That little tidbit meant that a page could only store items that were within 4MB of one another. And that was a problem. Whenever we had a posting list where two values were more than 4MB apart, we would need to split the page. And since the posting list and the entries live in the same file, having more page splits means that entries are now further apart.
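The effect of the reserved bits on the per-page range can be sketched like this. The layout below (8 frequency bits, 2 tag bits, both in the low bits of the value) is an assumption made for illustration; the text only says that 10 bits were reserved, 8 of them for the frequency.

```python
FREQ_BITS = 8  # from the text: 8 bits for the term frequency
TAG_BITS = 2   # assumption: the rest of the 10 reserved bits
RESERVED_BITS = FREQ_BITS + TAG_BITS


def pack(doc_id, freq, tag):
    """Pack id, frequency and tag into one integer (hypothetical layout)."""
    if not 0 <= freq < (1 << FREQ_BITS):
        raise ValueError("frequency does not fit in the reserved bits")
    if not 0 <= tag < (1 << TAG_BITS):
        raise ValueError("tag does not fit in the reserved bits")
    return (doc_id << RESERVED_BITS) | (freq << TAG_BITS) | tag


def unpack(value):
    """Split a packed value back into (doc_id, freq, tag)."""
    tag = value & ((1 << TAG_BITS) - 1)
    freq = (value >> TAG_BITS) & ((1 << FREQ_BITS) - 1)
    return value >> RESERVED_BITS, freq, tag


# Two neighbouring ids are now 1,024 apart once packed...
step = pack(2, 0, 0) - pack(1, 0, 0)

# ...so a 32-bit window per page only covers 2**(32 - 10) distinct ids.
ID_RANGE_PER_PAGE = 1 << (32 - RESERVED_BITS)
print(step, ID_RANGE_PER_PAGE)
```

Every reserved bit halves the range of ids that a single page can cover, which is how a comfortable window shrinks to roughly 4 million.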

Here is an example of what this looks like:

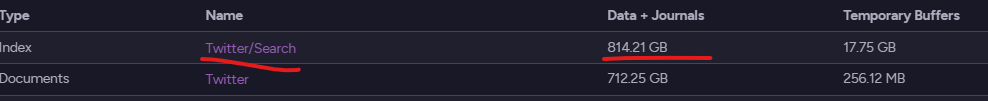

The index is taking more space than the source data, and most of that is used to store… nothing, since we ended up with a very wide posting list containing very few entries. This is one of those cases where two independent issues compound each other very quickly.
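A rough model shows how quickly this gets out of hand. The sketch below only counts range-driven page splits and ignores how many values actually fit in 8KB; `count_pages` and the sample data are hypothetical.

```python
PAGE_SIZE = 8 * 1024  # from the text: a page is 8KB


def count_pages(sorted_ids, page_range):
    """Start a new page whenever an id falls outside the current page's range."""
    pages = 0
    baseline = None
    for doc_id in sorted_ids:
        if baseline is None or doc_id - baseline >= page_range:
            pages += 1
            baseline = doc_id
    return pages


# Hypothetical sparse posting list: 1,000 ids, each 10 million apart.
sparse = [i * 10_000_000 for i in range(1000)]

narrow = count_pages(sparse, 2**22)  # roughly 4 million per page
wide = count_pages(sparse, 2**31)    # roughly 2.1 billion per page

print(narrow, narrow * PAGE_SIZE)  # pages and bytes with the narrow range
print(wide, wide * PAGE_SIZE)      # pages and bytes with the wide range
```

With the narrow range, every value lands on its own page, so 1,000 values cost 1,000 pages of 8KB each, almost all of it empty. With the wide range, the same values need only a handful of pages.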

So we changed things again. Instead of limiting ourselves to a 32-bit range per page, we changed the PFOR format to allow for 64-bit integers directly. Once again, that leads to simplification in the codebase and has greatly reduced the amount of disk space that we are actually using.
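To see why 64-bit values do not have to be expensive, here is a heavily simplified sketch of the patched frame of reference idea: store the deltas between sorted values in a small fixed number of bits, and record the few deltas that overflow as separate patches. This is not the Corax format, and all function names are invented for illustration.

```python
def delta_encode(sorted_values):
    """Replace each value with its distance from the previous one."""
    deltas, prev = [], 0
    for value in sorted_values:
        deltas.append(value - prev)
        prev = value
    return deltas


def delta_decode(deltas):
    """Rebuild the original values with a running sum."""
    values, total = [], 0
    for delta in deltas:
        total += delta
        values.append(total)
    return values


def split_exceptions(deltas, bits):
    """Keep the low `bits` of each delta; store overflowing high bits as patches."""
    mask = (1 << bits) - 1
    packed = [delta & mask for delta in deltas]
    patches = [(i, delta >> bits) for i, delta in enumerate(deltas) if delta >> bits]
    return packed, patches


def merge_exceptions(packed, patches, bits):
    """Apply the patches to get the full deltas back."""
    deltas = list(packed)
    for index, high in patches:
        deltas[index] |= high << bits
    return deltas


base = 1 << 40  # well beyond what a 32-bit integer can hold
values = [base, base + 3, base + 10, base + 10 + (1 << 35)]

deltas = delta_encode(values)
packed, patches = split_exceptions(deltas, bits=3)
restored = delta_decode(merge_exceptions(packed, patches, bits=3))
print(packed, patches)
```

Most deltas fit in the small fixed width, and only the occasional large jump pays for a patch, so there is no need for a per-page baseline to keep the values narrow.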

To give some context, here is the current amount of disk space taken by the same entity that previously took 800+GB:

The problem wasn’t with the number of entries, but that each entry would consume 8KB of disk space on its own, and in the image, you are seeing the number of posting lists, not the number of posting list entries.